I/usr/include/uuid -DNDEBUG -ggdb -O2 -pipe -Wall Gcc -DHAVE_CONFIG_H -DSYSTEM_WGETRC="/etc/wgetrc" Path, as it exceeds 256 chars soon due to the long names, and neitherįirefox nor Chrome can open file paths > 256 chars in Windows xD Note 2: in Windows, make sure to put the download to a short directory Note 1: the -domains list was built by looking at the result of command Totally unusable as the main CSS is missing. If you open the page offline (without anything cached!), it renders To the online page (!) althought it was downloaded locally and is needed " Result: after everything has finished, the link is altered to refer (reject only the forum and the wiki edit/history pages)

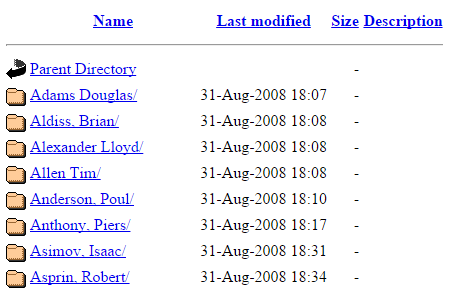

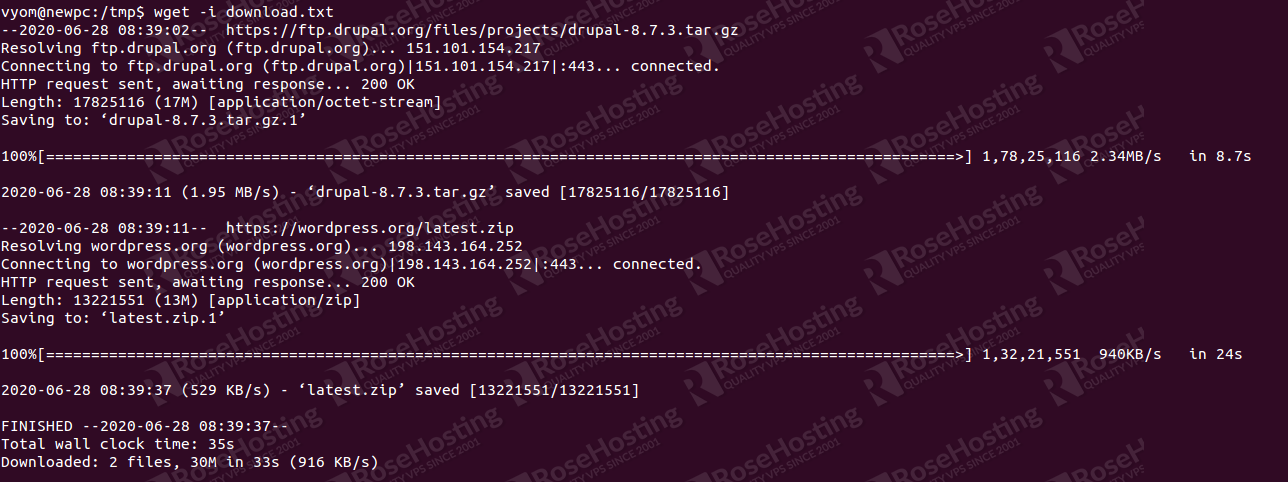

Run wget to load recursive but less limits in -reject-regex Attention: this downloads about 3300 Pages / 8500 Files! Result: a fully working local version inģa. Wget -recursive -no-clobber -page-requisites -html-extension run wget to load recursive but limit it with -reject-regex to very \index.html, the link element is replacedĢa. convert-links -restrict-file-names=windows -span-hostsġb. Wget -no-clobber -page-requisites -html-extension run wget to load a single page using -page-requisites: Updated if the download is limited to a few pages.ġa. I do have the following Problem: The Pageĭuring a single page download the link is updated with the local file,ĭuring a recursive download the file is downloaded, but the link is only
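To see which downloaded pages still have the problem, it can help to scan the mirror for stylesheet links that still point to the network, and for paths over the 256-character limit mentioned for Windows. The following is only a minimal sketch in Python, not part of wget. The names `remote_stylesheets` and `audit_mirror` are hypothetical helpers made up for this illustration, and the 256-character threshold is taken from the note about Windows paths.

```python
from html.parser import HTMLParser
from pathlib import Path


class StylesheetLinkParser(HTMLParser):
    """Collect the href values of <link rel="stylesheet"> elements."""

    def __init__(self):
        super().__init__()
        self.hrefs = []

    def handle_starttag(self, tag, attrs):
        if tag != "link":
            return
        attr = dict(attrs)
        rel = (attr.get("rel") or "").lower().split()
        href = attr.get("href")
        if "stylesheet" in rel and href:
            self.hrefs.append(href)


def is_remote(href):
    """Return True if the href still points at the network, not a local file."""
    return href.lower().startswith(("http://", "https://", "//"))


def remote_stylesheets(html_text):
    """Return the stylesheet hrefs in html_text that were not converted to local paths."""
    parser = StylesheetLinkParser()
    parser.feed(html_text)
    return [href for href in parser.hrefs if is_remote(href)]


def audit_mirror(root, max_path_len=256):
    """Walk a mirror directory (hypothetical helper).

    Returns a dict mapping each HTML file to its unconverted stylesheet links,
    and a list of files whose absolute path exceeds max_path_len characters.
    """
    unconverted = {}
    too_long = []
    for path in Path(root).rglob("*"):
        if not path.is_file():
            continue
        if len(str(path.resolve())) > max_path_len:
            too_long.append(str(path))
        if path.suffix.lower() in {".html", ".htm"}:
            text = path.read_text(encoding="utf-8", errors="replace")
            remote = remote_stylesheets(text)
            if remote:
                unconverted[str(path)] = remote
    return unconverted, too_long
```

Calling `audit_mirror("mirror")` on the download directory (the directory name is an assumption) returns both reports. A non-empty first result would show the symptom described in step 3: the CSS file is present locally, but the page still refers to the online copy.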
